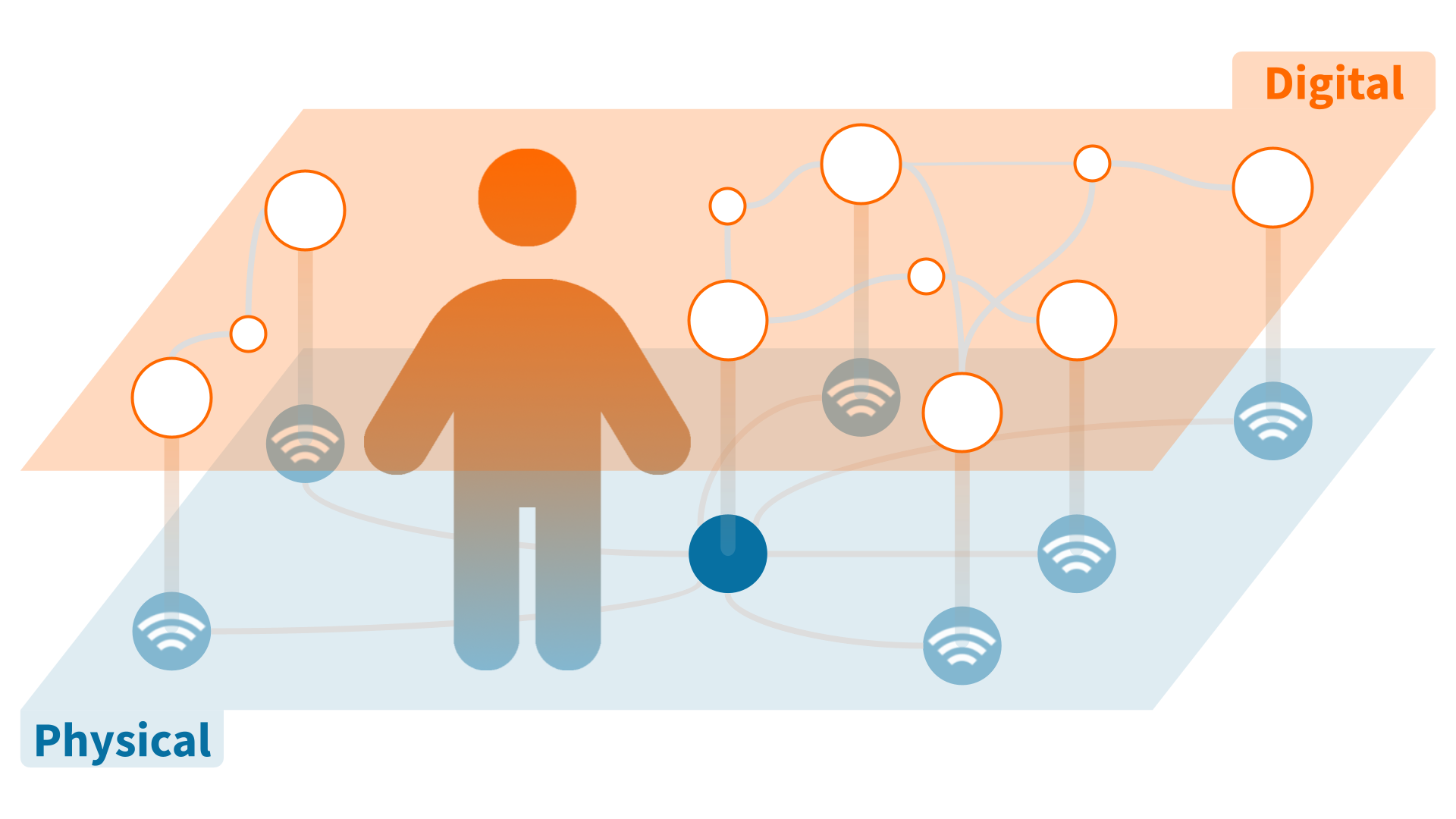

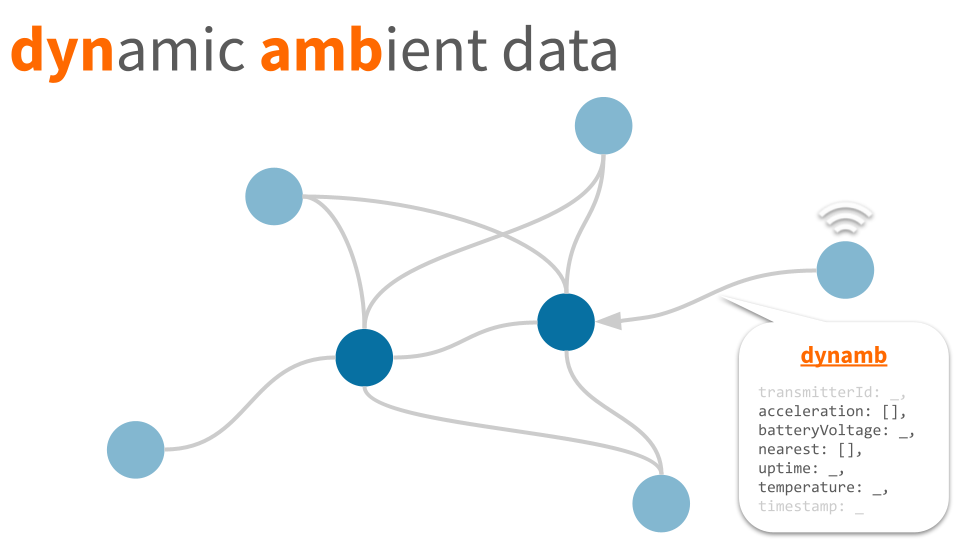

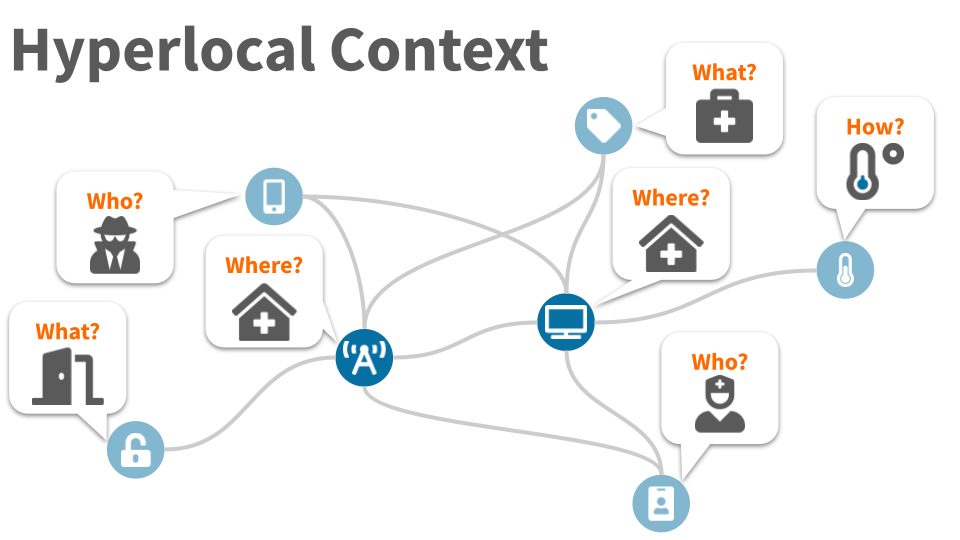

A digital snapshot of who/what is where/how, Hyperlocal Context is a machine-readable, real-time contextual representation of a physical space and its occupants.

Hyperlocal Context is a graph

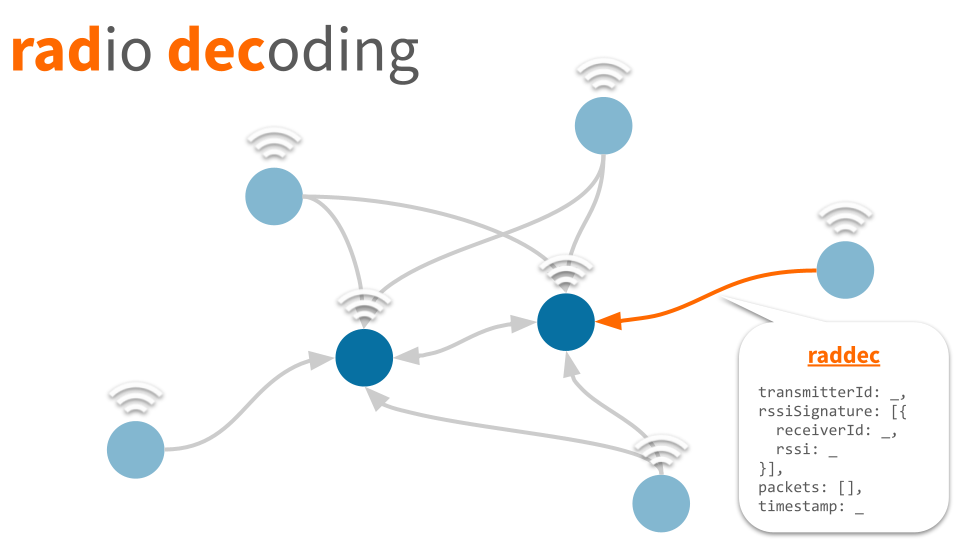

Every thing is connected by physical proximity

- nodes are radio-identifiable devices (i.e. physical things)

- edges are radio decodings (i.e. proximity)

- each node may include hyperlinks to additional data (i.e. digital twins)

Hyperlocal Context is JSON

The graph is represented as a list of devices, serialized as a JSON object keyed by each device's {id}/{type} identifier:

    "devices": {
      "{id}/{type}": { ... },
      "{id}/{type}": {
        "nearest": [ ... ],
        "dynamb": { ... },
        "statid": { ... },
        "url": "https://...",
        "tags": [ ... ],
        "directory": "a:b:c",
        "position": [ x, y, z ]
      },
      "{id}/{type}": { ... }
    }
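As a minimal sketch of how a consumer might read this structure, the following parses a payload and splits each {id}/{type} key into its two parts. The sample payload, its identifiers and values, and the helper names `split_identifier` and `list_devices` are hypothetical and chosen only for illustration.

```python
import json

# Hypothetical payload following the structure above; all identifiers and
# values are made up for illustration.
PAYLOAD = """
{
  "devices": {
    "ac233fa00001/2": {
      "nearest": [ { "device": "001bc50940810000/1", "rssi": -72 } ],
      "tags": [ "asset" ],
      "directory": "hq:lobby",
      "position": [ 1.0, 2.0, 0.0 ]
    },
    "001bc50940810000/1": {}
  }
}
"""

def split_identifier(key):
    """Split an "{id}/{type}" key into (id, type), both kept as strings."""
    device_id, _, device_type = key.rpartition("/")
    return device_id, device_type

def list_devices(payload):
    """Return (id, type, properties) tuples for every device in the payload."""
    devices = json.loads(payload)["devices"]
    return [split_identifier(key) + (props,) for key, props in devices.items()]

for device_id, device_type, props in list_devices(PAYLOAD):
    print(device_id, device_type, sorted(props.keys()))
```

Every property inside a device is treated as optional here, since a device entry may be an empty object.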

The nearest property is an ordered list of peer devices by proximity, as estimated by received signal strength (rssi), represented as an array of objects, each with the following structure:

    {
      "device": "{id}/{type}",
      "rssi": -72
    }

Each item in the list represents an edge in the graph, connecting this device to the peer device {id}/{type} in the list of devices.

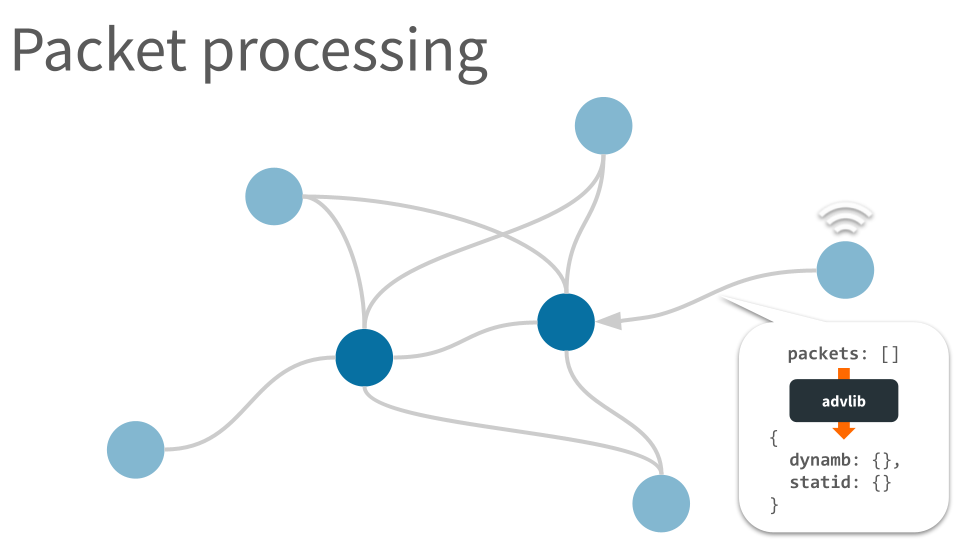

- where
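To illustrate how the nearest arrays encode the edges of the graph, here is a small sketch that flattens them into an edge list and picks the peer with the strongest signal. The sample devices and the function names `extract_edges` and `strongest_peer` are hypothetical; the only thing taken from the structure above is the `device` and `rssi` fields of each item.

```python
# Hypothetical devices object; identifiers and rssi values are made up.
DEVICES = {
    "aa0000000001/2": {
        "nearest": [
            {"device": "bb0000000001/1", "rssi": -60},
            {"device": "bb0000000002/1", "rssi": -72},
        ]
    },
    "bb0000000001/1": {},
    "bb0000000002/1": {},
}

def extract_edges(devices):
    """Return one (source, target, rssi) tuple per item in each nearest array."""
    edges = []
    for source, props in devices.items():
        for peer in props.get("nearest", []):
            edges.append((source, peer["device"], peer["rssi"]))
    return edges

def strongest_peer(devices, source):
    """Return the peer with the highest rssi for a device, or None if it has none."""
    nearest = devices.get(source, {}).get("nearest", [])
    if not nearest:
        return None
    # A higher (less negative) rssi means a stronger received signal.
    return max(nearest, key=lambda peer: peer["rssi"])["device"]

print(extract_edges(DEVICES))
print(strongest_peer(DEVICES, "aa0000000001/2"))
```

Selecting by maximum rssi rather than by array position keeps the sketch independent of any assumption about the ordering direction of the list.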

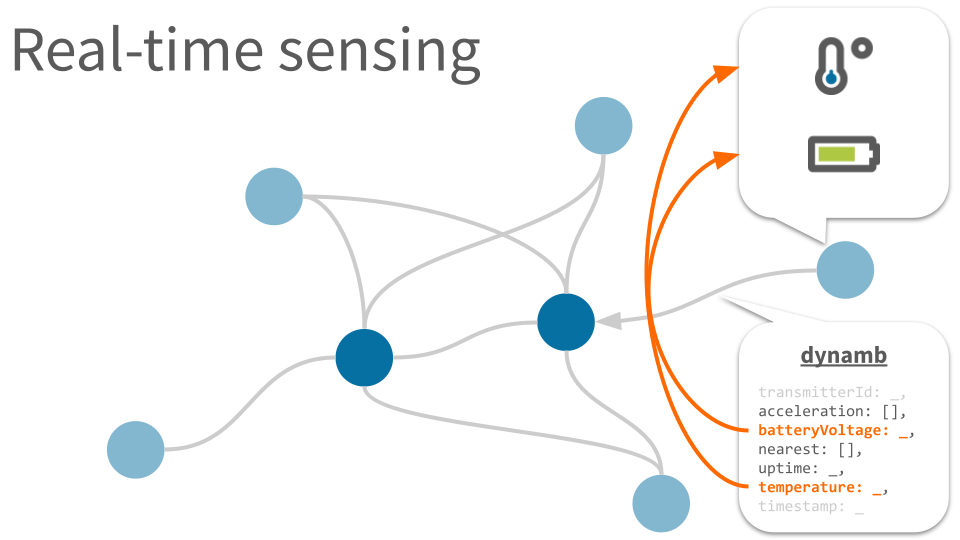

The dynamb property holds dynamic ambient data, represented as standard properties, including:

| batteryPercentage | heartRate | illuminance | isButtonPressed | temperature |

- how
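As a rough sketch of how dynamb data might be consumed, the following collects one named property across all devices that report it. The sample values and the function name `readings` are hypothetical, and no units are implied by the numbers shown.

```python
# Hypothetical devices object; only the dynamb property names come from the
# list above, the identifiers and values are made up.
DEVICES = {
    "aa0000000001/2": {"dynamb": {"temperature": 21.5, "batteryPercentage": 87}},
    "aa0000000002/2": {"dynamb": {"temperature": 23.5}},
    "aa0000000003/2": {},
}

def readings(devices, prop):
    """Map each device identifier to its dynamb value for prop, when present."""
    return {
        key: props["dynamb"][prop]
        for key, props in devices.items()
        if prop in props.get("dynamb", {})
    }

temperatures = readings(DEVICES, "temperature")
print(temperatures)
print(sum(temperatures.values()) / len(temperatures))
```

Devices without a dynamb object, or without the requested property, are simply skipped.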

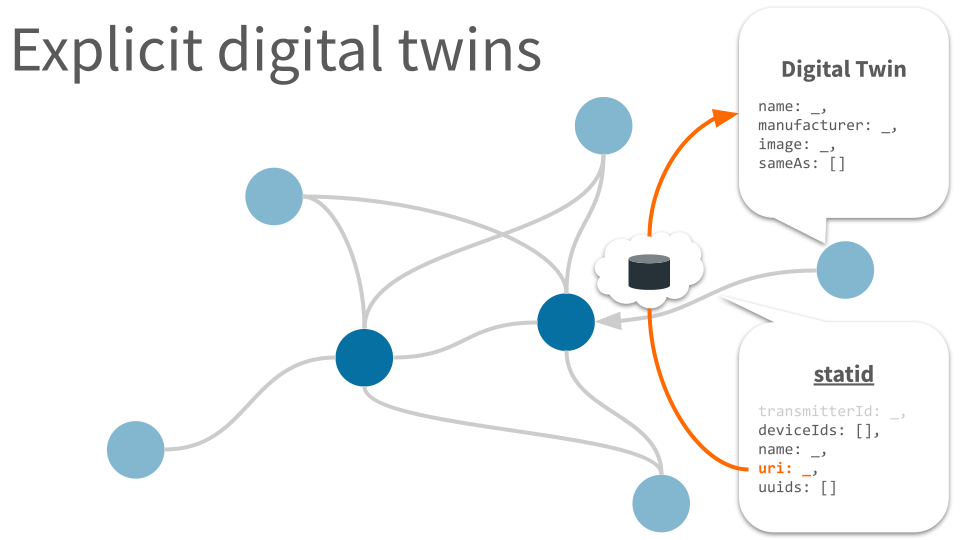

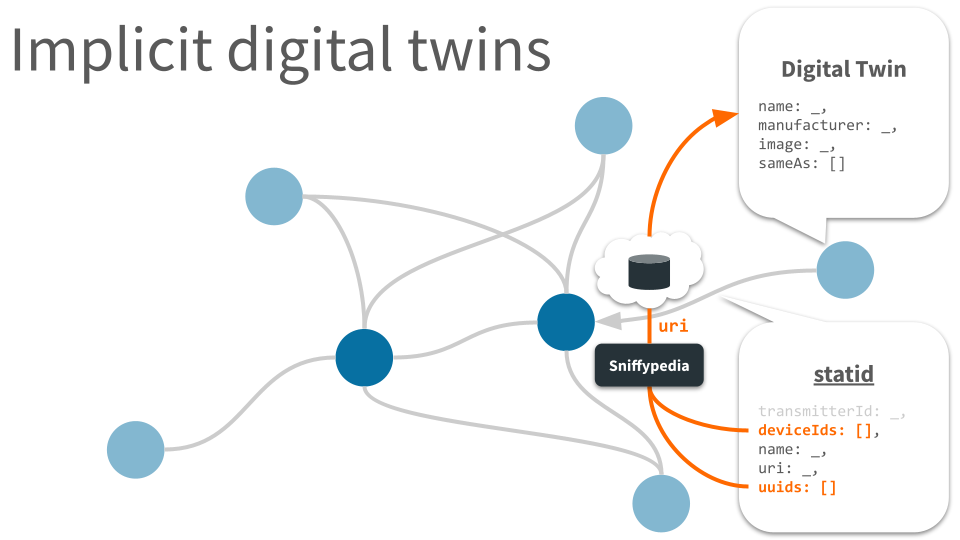

The statid property holds static identifier data, represented as standard properties, including:

| appearance | deviceIds | name | uri | uuids |

- what

The url property is a hyperlink to a digital twin, expected to be represented as JSON-LD using the Schema.org vocabulary.

The url is either retrieved by looking up statid identifiers in the Sniffypedia index, or associated with the specific device by chickadee.

- what

- who

- where

The tags property is a list of semantic tags associated with the device, represented as an array of strings.

The tags are associated with the specific device by chickadee.

- what

- who

- where
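One way tags might be used is to build an index from each tag to the devices carrying it. This is a sketch only: the sample devices, the tag values and the function name `index_by_tag` are hypothetical.

```python
from collections import defaultdict

# Hypothetical devices object; identifiers and tag values are made up.
DEVICES = {
    "aa0000000001/2": {"tags": ["asset", "cart"]},
    "aa0000000002/2": {"tags": ["asset"]},
    "aa0000000003/2": {},
}

def index_by_tag(devices):
    """Return a dict mapping each tag to the list of devices that carry it."""
    index = defaultdict(list)
    for key, props in devices.items():
        for tag in props.get("tags", []):
            index[tag].append(key)
    return dict(index)

print(index_by_tag(DEVICES))
```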

The directory property is a hierarchical semantic description of location, represented as a colon-separated string.

The directory is associated with the specific device by chickadee.

- where
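Because the directory is a colon-separated hierarchy, containment can be checked by comparing path segments. The sketch below assumes the string reads from the broadest level to the most specific (so "a:b:c" lies within "a:b"), which is an assumption and not something stated above; the function names `is_within` and `devices_in` and the sample data are hypothetical.

```python
# Hypothetical devices object; identifiers and directory values are made up.
DEVICES = {
    "aa0000000001/2": {"directory": "a:b:c"},
    "aa0000000002/2": {"directory": "a:bc"},
    "aa0000000003/2": {"directory": "a:b"},
    "aa0000000004/2": {},
}

def is_within(directory, ancestor):
    """Return True if directory equals ancestor or is nested beneath it."""
    parts = directory.split(":")
    prefix = ancestor.split(":")
    # Compare whole segments so that "a:bc" is not mistaken for a child of "a:b".
    return parts[:len(prefix)] == prefix

def devices_in(devices, ancestor):
    """Return identifiers of devices whose directory falls within ancestor."""
    return [
        key for key, props in devices.items()
        if "directory" in props and is_within(props["directory"], ancestor)
    ]

print(devices_in(DEVICES, "a:b"))
```

Comparing segments rather than using a plain string prefix avoids false matches between sibling names that share leading characters.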

Developers, developers, developers!

Our cheatsheet details the underlying data structures.

Hyperlocal Context is IoT

If the Internet of Things (IoT) is defined as:

enabl[ing] computers to observe, identify and understand the world—without the limitations of human-entered data.

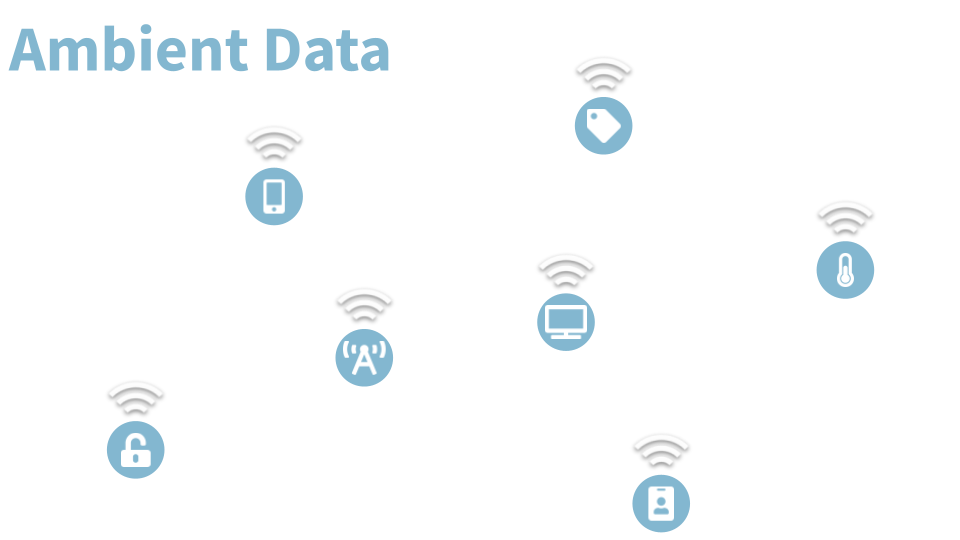

then hyperlocal context is a web-standard means for computers to represent and exchange their understanding of the physical world. Hyperlocal context is derived by processing a real-time stream of ambient data.

Hyperlocal Context is published

Towards collective hyperlocal contextual awareness among heterogeneous RFID systems

Jeffrey Dungen, Juan Pinazo (reelyActive)

Hyperlocal Context to Facilitate an Internet of Things Understanding of the World

Jeffrey Dungen, Pier-Olivier Genest (reelyActive)

Hyperlocal Context is open source

Pareto Anywhere

The open source IoT middleware enabling context-aware physical spaces.